Club JK / jkcybersecurity

Monday, March 30, 2026

The Meta Verdict Actually Matters

Monday, March 9, 2026

AI Agents Are Having Their Moment of Truth — And It is Ugly

Gartner says AI is in the Trough of Disillusionment throughout 2026. Translation: the hype is crashing into reality, and companies are waking up to the fact that their AI projects are not delivering.

The numbers are brutal. Gartner forecasts $2.5 trillion in AI spending this year — up 44% year over year. But here is the kicker: 90% of AI projects fail. That is not a typo. Nine out of ten AI initiatives flame out.

What Went Wrong

The promise was easy. Deploy AI, save money, break things. The reality is messier.

Most companies jumped in without asking hard questions. They bought the tooling, hired the consultants, built the proof of concept, and then hit the wall. Integration with existing systems. Data quality issues. Governance and compliance. The boring stuff that nobody wanted to talk about at the conference.

AI agents made it worse. The idea of autonomous agents — software that could reason, plan, execute — was intoxicating. Vendors promised the moon. The reality? Hallucinations, security gaps, and agents that could not reason their way out of a paper bag.

Why Agents Specifically Are Struggling

Here is the thing about AI agents: they are only as good as their context. A chat bot can wing it. An agent making real decisions in your infrastructure? That is a different story.

The problems:

- Reliability: Agents drift. They take unexpected paths. They hallucinate actions they never took.

- Security: Giving an agent access to your systems means giving an agent access to your systems. The attack surface is massive.

- Governance: Who is on the hook when the agent does something dumb? You are.

- Cost: Running agents at scale burns compute. Fast.

The Good News

The Trough is not the end. It is the correction.

Every major technology went through this. Cloud computing. Containers. Kubernetes. The survivors figured out what actually works and built real businesses on top of it.

For AI agents, that means:

- Narrow use cases beat broad ambition. Do not try to replace your entire workforce. Find one specific task and solve it.

- Human in the loop is a feature, not a bug. Agents that suggest, humans that decide. That is the model that works now.

- The boring stuff matters. Data quality, integration, monitoring. The unsexy stuff is what separates winners from the 90% who fail.
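As a rough illustration of "agents that suggest, humans that decide", here is a minimal Python sketch of an approval gate. The names `ProposedAction` and `execute_with_approval` are made up for this example and do not come from any particular agent framework; the idea is only that the side effect stays wrapped in a function until a person says yes.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class ProposedAction:
    """Something the agent wants to do (hypothetical structure)."""
    description: str        # human-readable summary shown to the reviewer
    run: Callable[[], str]  # the side effect, executed only after approval


def execute_with_approval(action: ProposedAction,
                          approve: Callable[[str], bool]) -> str:
    """Run the action only if the human reviewer approves it."""
    if not approve(action.description):
        return f"SKIPPED: {action.description}"
    return action.run()
```

In practice `approve` could be a console prompt, a Telegram reply, or a ticket sign-off. Passing it in as a function keeps the gate the same regardless of how the human is asked.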

What to Do

If you are building with AI agents right now:

- Start small. One process. One domain. Prove it works before you scale.

- Budget for the boring stuff. You will spend more time on integration than on the model itself.

- Keep humans in the loop. Until the technology matures, that is how you avoid catastrophe.

- Treat agents as augmentation, not replacement. They are tools. Use them as such.

The Trough of Disillusionment is when the pretenders leave and the real builders stay. If you are still here, you are ahead of the game.

The question is not whether AI agents will work. It is whether you will be one of the ones who figured out how to make them work. Let me know if you want to dig into specific implementation patterns.

Friday, March 6, 2026

I have been running a one-person cybersecurity practice for years. Then I discovered what happens when you pair an LLM with the right infrastructure.

This is my setup.

What I am Running

Three machines in my homelab:

- Mac mini (basement): Runs OpenClaw, my Telegram bot, automated agents

- Kraken: 4-GPU rig for heavy compute

- Kali VM: Penetration testing playground

The Mac mini handles the lightweight stuff — scheduling, messaging, orchestration. Kraken kicks in when I need GPU acceleration for model inference or training. The Kali VM is where I break things.

The Brains: OpenClaw + Claude

OpenClaw is an agent framework that gives me:

- A persistent agent I can message on Telegram

- Sub-agents I can spawn for parallel tasks

- Browser automation

- File system access

- MCP server integration

I talk to it like a person: "Run a pen test on X." It figures out the tools, executes, and reports back.

Here is what makes it different from just using Claude in the browser:

- Persistence: The agent remembers context across conversations

- Tool access: It can execute commands, not just suggest them

- Automation: I can schedule recurring tasks (my daily AI pentesting research runs every morning)

- MCP servers: I bolted on security tools directly

MCP Servers: The Force Multipliers

Model Context Protocol lets me connect AI directly to tools. My current setup:

- Metasploit: Automated vulnerability scanning

- Kali Linux: Full pen test toolkit

- Burp Suite: Web app testing

- OWASP ZAP: Automated DAST

When I tell the agent to "check this URL for vulnerabilities," it spins up Burp, runs scans, parses results, and hands me a report. I do not touch the tools manually anymore.

The workflow is:

User → Telegram message → OpenClaw → Claude → MCP → Tool → Result → Telegram response

Total elapsed time: usually under a minute for basic tasks.
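To make the shape of that workflow concrete, here is a toy Python sketch. It is not OpenClaw's or MCP's actual API: `TOOLS`, `plan`, and `handle_message` are hypothetical names, the tools are plain functions that only return a string, and the keyword check in `plan` stands in for the step where the model decides which tool to call.

```python
from typing import Callable, Dict, Tuple

# Hypothetical tool registry standing in for MCP servers.
# Each "tool" here just returns a string; nothing is actually scanned.
TOOLS: Dict[str, Callable[[str], str]] = {
    "port_scan": lambda target: f"port scan queued for {target}",
    "web_scan": lambda target: f"web scan queued for {target}",
}


def plan(message: str) -> Tuple[str, str]:
    """Stand-in for the LLM step: pick a tool name and a target."""
    target = message.split()[-1]
    tool = "web_scan" if "web" in message.lower() else "port_scan"
    return tool, target


def handle_message(message: str) -> str:
    """Message in, tool result out: the same shape as the workflow above."""
    tool_name, target = plan(message)
    tool = TOOLS.get(tool_name)
    if tool is None:
        return f"no tool named {tool_name}"
    return tool(target)
```

The point of the registry is that adding a capability means adding one entry, which is roughly what bolting on another MCP server does for the real agent.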

What Actually Happens

Let me give you a real example.

Yesterday I needed to test a client's web app. I typed:

Run a quick pen test on client-site.com, focus on OWASP Top 10

The agent:

- Spawned a sub-agent

- Fired up OWASP ZAP in passive mode

- Kicked off an Nmap scan

- Cross-referenced open ports with known exploits

- Returned a prioritized finding list in about 45 seconds

Was it as thorough as a manual engagement? No. But it found three medium-severity issues I would have missed doing it manually. And it cost me zero extra effort.

The Numbers

- Monthly AI spend: Around $200-300 in API calls (Claude + Grok)

- Time saved: Hard to quantify, but I would guess 10-15 hours/week on repetitive tasks

- Tasks automated: Daily threat intel, vulnerability scanning, report drafting, Slack/Telegram notifications

What I Would Do Differently

If you are building this:

- Start small: Do not try to automate everything. Pick one repetitive task and solve that first.

- Do not cheap out on the LLM: The $20/month Claude subscription pays for itself in an hour. The reasoning quality difference between cheap and premium models is enormous.

- Home lab > cloud: I run everything local. Kraken has 4 GPUs I use for model fine-tuning. Total electric bill: maybe $150/month. Compare that to AWS and it is not close.

- MCP is the key: The integration layer matters more than the model. The better your tool connections, the more the AI can actually do.

The Point

I am a one-person shop. I do not have a team. I do not have a SOC. I do not have a devops department.

What I have is an agent that never sleeps, never complains, and can spin up a Metasploit session faster than I can remember the syntax.

The future of solo operators is not about working harder. It is about building better systems.

Want details on any specific piece? Hit me up.

Wednesday, March 4, 2026

The Pope Just Said What Everyone's Thinking About AI

Pope Leo XIV went to Rome last week and told Catholic priests to stop using ChatGPT to write their sermons. That's the leader of 1.4 billion people saying AI can't replace the real thing.

"To give a true homily is to share faith, and AI will never be able to share faith."

That's a direct quote. No fluff, no hedging. The Pope looked at what priests were doing and said cut it out.

And here's the thing—he's right.

I don't care if you're religious or not. The point isn't the theology. It's that the Pope clocked something most people in the AI hype bubble won't admit: there's a difference between generating words and having something to say.

The Roggin Report tested an AI-generated sermon. Panelists said it lacked something. They couldn't quite name it, but they knew it was missing. That's the problem with AI writing—it's technically correct, structurally sound, and completely empty. It reads like a sermon. It sounds like a sermon. But it's not a sermon. It's a rough draft that pretends to have a soul.

The Pope also called out TikTok. Said chasing likes and followers is an "illusion" that passes for spiritual connection. Guy is 70 years old and figured out what most influencers haven't: you can have a million followers and still be alone.

Look, I'm not here to tell you AI is bad. I use it. I write with it. But there's a difference between using a tool and substituting it for the real work. A priest who lets ChatGPT write their Sunday talk is skipping the hard part—the reflection, the struggle, the actual engagement with the text. That's not a sermon. That's a paraphrase.

The Pope gets it. Maybe more people should listen.


Monday, March 2, 2026

The 27-Second Breakout: How AI-Enabled Adversaries Are Rewriting the Rules of Cyberwarfare

When malware becomes optional and speed becomes the weapon of choice

The Numbers That Should Wake You Up

CrowdStrike's 2026 Global Threat Report dropped last week, and the statistics are sobering. We're not looking at incremental change—we're looking at a fundamental shift in how attackers operate.

The headline figures:

- 89% increase in attacks by AI-enabled adversaries

- 82% of detections in 2025 were malware-free

- 29 minutes average breakout time (65% faster than in 2024)

- 27 seconds—the fastest observed breakout

Let that last number sink in. Twenty-seven seconds from initial access to lateral movement. That's not enough time to finish a sip of coffee, let alone mount an effective response.

The Rise of the "Evasive Adversary"

What CrowdStrike calls the "evasive adversary" represents a new breed of threat actor—one that doesn't need to drop malware to achieve their objectives. Instead, they're "living off the land," using legitimate tools and native system capabilities to blend into normal operations.

This isn't new in concept. PowerShell-based attacks and LOLBins (Living Off the Land Binaries) have been around for years. What's changed is the scale and sophistication that AI enables.

How AI Changes the Game

1. Automated Reconnaissance at Scale

Traditional attackers might spend days or weeks mapping a network. AI-enabled adversaries can analyze network topology, identify high-value targets, and map privilege escalation paths in minutes. The reconnaissance phase that once took a human team weeks now happens in the time it takes to brew coffee.

2. Adaptive Evasion Techniques

Machine learning models can analyze defensive patterns in real-time and adjust tactics accordingly. If one approach triggers an alert, the AI pivots instantly—testing variations until it finds a path that works. It's like playing chess against an opponent who can simulate a million moves per second.

3. Hyper-Personalized Social Engineering

AI-generated phishing has moved beyond clumsy grammar errors and generic templates. Today's AI can scrape social media, analyze communication patterns, and craft messages that mimic the writing style of colleagues, executives, or trusted vendors. The Nigerian prince has been replaced by a convincing facsimile of your CFO.

4. Malware-Free Persistence

Why drop a payload when you can use the tools already installed? AI agents can identify and abuse legitimate remote access tools, cloud services, and administrative utilities. The activity looks normal because it is normal—just weaponized.

Why Traditional Defenses Are Failing

The cybersecurity industry has spent decades building defenses around a simple model: detect the malware, block the malware, analyze the malware. But when 82% of detections are malware-free, that model breaks down.

The Signature Problem

Signature-based detection—whether for files, network traffic, or behaviors—relies on knowing what to look for. AI-enabled adversaries generate unique approaches for each target. By the time a signature exists, the attack has already succeeded.

The Speed Gap

The average SOC takes 197 days to identify a breach. AI-enabled adversaries achieve their objectives in under 30 minutes. We're not just behind—we're operating in different time zones.

The Alert Fatigue Trap

Security teams are drowning in false positives. When everything generates an alert, analysts become desensitized. AI-enabled attackers exploit this by crafting attacks that generate just enough noise to blend in, but not enough to trigger immediate escalation.

Building a Defense for the AI Era

If we can't out-speed the attackers, we need to out-smart them. Here's what effective defense looks like in 2026:

1. Behavioral Detection Over Signature Matching

Stop looking for malware and start looking for anomalies. Baseline normal behavior for users, systems, and networks. When someone accesses resources they've never touched, at unusual times, from unexpected locations—that's your signal.

Key capabilities:

- User and Entity Behavior Analytics (UEBA)

- Network traffic analysis with ML-powered anomaly detection

- Privileged access monitoring with context-aware alerting
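A toy version of that baselining idea, in Python, might look like the sketch below. The `UserBaseline` class, its method names, and the z-score threshold of 3.0 are all assumptions made for illustration; a real UEBA product models far more signals. It flags two things from the paragraph above: a resource the user has never touched, and an access hour far from their usual pattern.

```python
from collections import defaultdict
from statistics import mean, pstdev


class UserBaseline:
    """Per-user record of resources touched and hours of activity (illustrative)."""

    def __init__(self):
        self.resources = defaultdict(set)  # user -> resources seen before
        self.hours = defaultdict(list)     # user -> hours (0-23) seen before

    def learn(self, user, resource, hour):
        """Record one observation of normal activity."""
        self.resources[user].add(resource)
        self.hours[user].append(hour)

    def score(self, user, resource, hour, z_threshold=3.0):
        """Return a list of reasons this access looks unusual (empty if none)."""
        reasons = []
        if resource not in self.resources[user]:
            reasons.append("new resource")
        history = self.hours[user]
        if len(history) >= 5:  # need some history before judging the hour
            mu, sigma = mean(history), pstdev(history)
            if sigma > 0 and abs(hour - mu) / sigma > z_threshold:
                reasons.append("unusual hour")
        return reasons
```

One known simplification: hours are treated as a straight line, so 23:00 and 01:00 look far apart. A production version would handle the wrap-around and would also weigh location and device.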

2. Assume Breach, Detect Fast

The 27-second breakout tells us that prevention alone is insufficient. Design your architecture assuming compromise will happen. Focus on:

- Micro-segmentation: Limit lateral movement opportunities

- Zero Trust: Verify every access request, every time

- Deception technology: Honeypots and honeytokens that trigger high-fidelity alerts

3. Automate the Response

If attackers use AI for speed, defenders must match it. Manual incident response processes that take hours or days are no longer viable.

Automated response capabilities:

- Isolate compromised endpoints within seconds

- Revoke sessions and credentials automatically

- Dynamic firewall rules based on threat intelligence

- SOAR playbooks for common attack patterns

4. Threat Hunting, Not Just Monitoring

Passive monitoring waits for alerts. Threat hunting proactively searches for indicators of compromise that evaded detection.

Hunting hypotheses to explore:

- Users accessing cloud resources outside business hours

- Administrative tools executed by non-admin accounts

- Unusual data transfer volumes to external destinations

- PowerShell execution with encoded commands
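The last hypothesis is easy to prototype. PowerShell's `-EncodedCommand` parameter takes a base64 string of UTF-16LE text and accepts abbreviations such as `-enc` and `-e`. The Python sketch below scans a process command line for that flag and decodes the payload so an analyst can read it. The function name and the regex are illustrative, not taken from any specific EDR or SIEM.

```python
import base64
import re

# A flag starting with "-e", followed by something that looks like base64.
FLAG = re.compile(r"(?i)(?:^|\s)-(e[a-z]*)\s+([A-Za-z0-9+/]{8,}={0,2})")


def find_encoded_command(command_line: str):
    """Return the decoded script if the command line uses -EncodedCommand, else None."""
    for match in FLAG.finditer(command_line):
        flag, blob = match.group(1).lower(), match.group(2)
        # Accept any prefix of "encodedcommand" (-e, -enc, ...) plus the -ec alias.
        if not ("encodedcommand".startswith(flag) or flag == "ec"):
            continue
        try:
            return base64.b64decode(blob, validate=True).decode("utf-16-le")
        except ValueError:  # covers bad base64 and bad UTF-16
            continue
    return None
```

Run over process-creation logs, this turns an opaque blob into readable script text, which is usually enough to decide whether the execution deserves a closer look.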

5. Adversarial AI for Defense

Fight fire with fire. Deploy AI systems that:

- Generate synthetic attack scenarios for testing defenses

- Predict attacker paths based on network topology

- Automatically correlate disparate events into attack chains

- Continuously adapt detection models based on new threat intelligence

The Human Element

Technology alone won't save us. The most critical defense is a well-trained team that understands:

- What AI-enabled attacks look like in practice

- How to investigate without relying on malware signatures

- When to escalate based on behavioral indicators

- How to respond under time pressure

Invest in continuous training. Run tabletop exercises with realistic scenarios. Build muscle memory for the 27-second reality.

Looking Ahead

The 89% increase in AI-enabled attacks isn't a spike—it's the new baseline. As AI tools become more accessible and sophisticated, the barrier to entry for advanced attacks continues to drop.

We're entering an era where the question isn't "if" you'll face an AI-enabled adversary, but "when." And when that moment comes, you'll have 29 minutes—or less—to respond.

The defenders who thrive in this environment won't be the ones with the most tools or the biggest budgets. They'll be the ones who adapted their thinking, their processes, and their technology to match a threat that moves at machine speed.

The 27-second breakout is a wake-up call. The question is: are you listening?

Resources for Deeper Dive

- CrowdStrike 2026 Global Threat Report

- MITRE ATT&CK Framework: Living Off the Land

- CISA: Defending Against Malware-Free Intrusions

John Kennedy is a cybersecurity professional with 34 years of military experience in information warfare and 9 years in civilian penetration testing and security assessment. He writes about the intersection of AI, cloud security, and modern threat landscapes.

Friday, November 9, 2018

https://youtu.be/YoNrNBnmwuY

Friday, October 20, 2017

SLAE64 Exam - Assignment 7 of 7 (Cryptor)

SLAE64 - 1501

Success in these 7 assignments results in the Pentester Academy's SLAE64 certification.

http://www.securitytube-training.com/online-courses/x8664-assembly-and-shellcoding-on-linux/index.html

All 3 files used in this assignment are here:

https://github.com/clubjk/SLAE64-3/tree/master/exam/cryptor

Assignment:

I chose an AES encryption script created by Blu3Gl0w13. Check out his excellent blog here.

I elected to use the execve-stack shellcode as a base for this assignment. I extracted its shellcode using a modified objdump command.

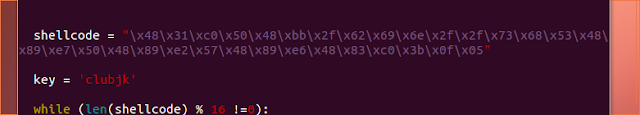
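For readers who cannot see the screenshot, here is a rough Python equivalent of that extraction step. It is not the exact command used above. It assumes the usual tab-separated `objdump -d` layout (address, hex bytes, mnemonic), and the function name `shellcode_from_objdump` is made up for this sketch.

```python
def shellcode_from_objdump(disassembly: str) -> str:
    r"""Turn `objdump -d` text into a \x-escaped shellcode string."""
    hex_bytes = []
    for line in disassembly.splitlines():
        parts = line.split("\t")
        # Instruction lines look like: "  400080:\t48 31 c0   \txor %rax,%rax"
        # Header and symbol lines have no tab, so they are skipped.
        if len(parts) >= 2 and parts[0].strip().endswith(":"):
            hex_bytes.extend(parts[1].split())
    return "".join(f"\\x{b}" for b in hex_bytes)
```

Feeding it the saved output of `objdump -d` on the assembled binary yields a string that can be pasted into a script. It is also worth checking that the result contains no `\x00` bytes, since nulls tend to break shellcode delivered through string functions.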

I pasted the shellcode into encryptor.py and chose a key of 'clubjk'.

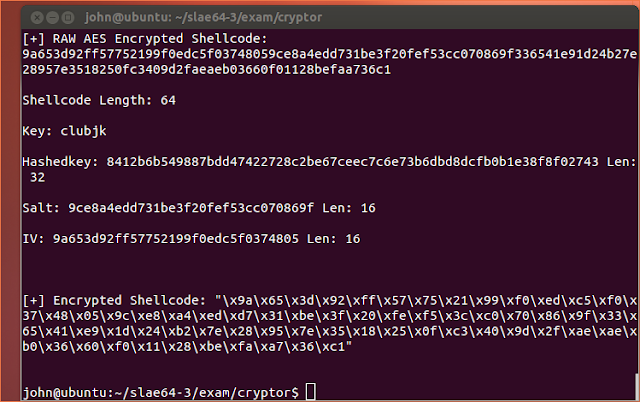

I executed the script, and it output the encrypted shellcode.

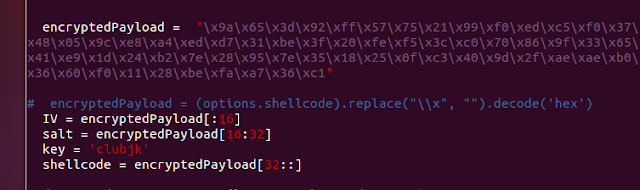

I pasted this encrypted shellcode into decryptor.py and added the same key, 'clubjk'.

I executed decryptor.py, and the decrypted execve-stack shellcode ran uneventfully.

It worked. Yay.

Files used in this assignment:

execve-stack.nasm

encryptor.py

decryptor.py

All are here:

https://github.com/clubjk/SLAE64-3/tree/master/exam/cryptor